artificial intelligence

MashApp, a music remixing app featuring hit songs from Doja Cat, Ed Sheeran, Britney Spears and more, launched in the Apple app store Tuesday (Feb. 18). At launch, the AI-powered app has already worked out licenses for select tracks from Universal Music Group (UMG) and Warner Music Group’s (WMG’s) publishing and recorded music catalogs, Sony Music’s recorded music catalog, and Kobalt’s publishing catalog.

The app, founded by former Spotify executive Ian Henderson, features a TikTok-like vertical feed for users to share the remixes they make with the app. Among the tools it offers to users, MashApp boasts the ability to combine and mix multiple songs into each other and to speed up, slow down or separate out a song into its individual stems (the individual instrument tracks in a master recording).

News of MashApp’s launch arrives just days after Bloomberg reported that Spotify is planning to launch a superfan streaming tier that includes extra features, like high-fidelity audio and in-app remixing tools.

MashApp, however, is the latest standalone app to use cutting-edge technology, like AI, to allow users to morph and manipulate their favorite songs. Last year, Hook, another AI remix app, announced its licensing deal with Downtown. Other companies, like Lifescore, Reactional Music and Minibeats, have also played in recent years with the idea of giving users more control over the music they listen to. With these tools, fans can turn static recordings into dynamic works that evolve over time based on a listener’s situation, whether that’s having the music respond to actions in a video game in real time or while driving a car. Even Ye (formerly Kanye West) played with this concept during the rollout of his album Donda 2, which was only available via a hardware device, called a Stem Player, that let listeners control the mix of the album.

MashApp is available for free or via a paid subscription for ad-free listening and additional features. Though mashups and remixes of hit songs are popular soundtracks for short-form content on TikTok and Instagram Reels, MashApp creations must be enjoyed within the app and are only available for personal use.

“MashApp’s mission is to bring the joy of playing with music creation to non-musicians, to let people play with their favorite music, as they have long done through DJing, mix tapes, mashups, and karaoke,” explained MashApp CEO/founder Henderson in a statement. “We want this new creative play to be a great experience for fans, but also for artists. This requires close partnerships with record labels and music publishers, and we’re excited that our partners have embraced our vision.”

Mark Piibe, executive vp of global business development & digital strategy at Sony Music, added: “We are pleased to be working with MashApp to help fans go deeper in how they engage with their favorite music through a new personalization and creation experience that appropriately values the work of our artists. This partnership furthers Sony Music’s ongoing commitment to supporting innovation in the marketplace by collaborating with developers of quality products that see opportunity in solutions that respect the rights of professional creators.”

“UMG always seeks to support innovation in the digital music ecosystem. MashApp introduces another evolution of the streaming experience for users by combining the creativity of DJ apps, with the accessibility that streaming offers,” said Nadir Contractor, senior vp of digital strategy & business development at UMG. “Within MashApp, users can unlock their own creative expression to curate, play and enjoy in real-time musical mashups from their favorite artists and songs, while respecting and supporting artist rights.”

“Our commitment to championing the rights of our artists and songwriters is at the core of everything we do,” said John Rees, senior vp of strategy & business development at WMG. “This partnership with MashApp builds on this mission–delivering a licensed, innovative platform that not only offers fans an exciting way to engage with music but also safeguards the work of the artists and songwriters who make it all possible.”

Lastly, Bob Bruderman, chief digital officer at Kobalt Music, added, “Kobalt has been a strong supporter of new companies that allow fans to express their creativity and engage with music they love. It was immediately clear that MashApp had a unique vision that opened a new experience for music fans on a well-executed platform, simultaneously respecting copyright. We look forward to a long partnership with MashApp.”

This analysis is part of Billboard’s music technology newsletter Machine Learnings. Sign up for Machine Learnings, and other Billboard newsletters for free here.

Have you heard about our lord and savior, Shrimp Jesus?

Last year, a photo of Jesus made out of shrimp went viral on Facebook, and while it might seem obvious to you and me that generative AI was behind this bizarre combination, plenty of boomers still thought it was real.

Bizarre AI images like these have become part of an exponentially growing problem on social media sites, where they are rarely labeled as AI and are so eye grabbing that they draw the attention of users, and the algorithm along with them. That means less time and space for the posts from friends, family and human creators that you want to see on your feed. Of course, AI makes some valuable creations, too, but let’s be honest, how many images of crustacean-encrusted Jesus are really necessary?

This has led to a term called the “Dead Internet Theory” — the idea that AI-generated material will eventually flood the internet so thoroughly that nothing human can be found. And guess what? The same so-called “AI Slop” phenomenon is growing fast in the music business, too, as quickly-generated AI songs flood DSPs. (Dead Streamer Theory? Ha. Ha.) According to CISAC and PMP, this could put 24% of music creators’ revenues at risk by 2028 — so it seems like the right time for streaming services to create policies around AI material. But exactly how they should take action remains unclear.

In January, French streaming service Deezer took its first step toward a solution by launching an AI detection tool that will flag whatever it deems fully AI generated, tag it as such and remove it from algorithmic recommendations. Surprisingly, the company claims the tool found that about 10% of the tracks uploaded to its service every day are fully AI generated.

I thought Deezer’s announcement sounded like a great solution: AI music can remain for those who want to listen to it, can still earn royalties, but won’t be pushed in users’ faces, giving human-made content a little head start. I wondered why other companies hadn’t also followed suit. After speaking to multiple AI experts, however, it seems many of today’s AI detection tools generally still leave something to be desired. “There’s a lot of false positives,” one AI expert, who has tested out a variety of detectors on the market, says.

The fear for some streamers is that a bad AI detection tool could open up the possibility of human-made songs getting accidentally caught up in a whirlwind of AI issues, and become a huge headache for the staff who would have to review the inevitable complaints from users. And really, when you get down to it, how can the naked ear definitively tell the difference between human-generated and AI-generated music?

This is not to say that Deezer’s proprietary AI music detector isn’t great — it sounds like a step in the right direction — but the newness and skepticism that surrounds this AI detection technology is clearly a reason why other streaming services have been reluctant to try it themselves.

Still, protecting against the negative use-cases of AI music, like spamming, streaming fraud and deepfaking, is a focus for many streaming services today, even though almost all of the policies in place to date are not specific to AI.

It’s also too soon to tell what the appetite is for AI music. As long as the song is good, will it really matter where it came from? It’s possible this is a moment that we’ll look back on with a laugh. Maybe future generations won’t discriminate between fully AI, partially AI or fully human works. A good song is a good song.

But we aren’t there yet. The US Copyright Office just issued a new directive affirming that fully AI generated works are ineligible for copyright protection. For streaming services, this technically means that, as with any other public domain work, they don’t need to pay royalties on such tracks. But so far, most platforms have continued to pay out on anything that’s up on the site, copyright protected or not.

Except for SoundCloud, a platform that’s always marched to the beat of its own drum. It has a policy which “prohibit[s] the monetization of songs and content that are exclusively generated through AI, encouraging creators to use AI as a tool rather than a replacement of human creation,” a company spokesperson says.

In general, most streaming services do not have specific policies, but Spotify, YouTube Music and others have implemented procedures for users to report impersonations of likenesses and voices, a major risk posed by (but not unique to) AI. This closely resembles the method for requesting a takedown on the grounds of copyright infringement — but it has limits.

Takedowns for copyright infringement are required by law, but some streamers voluntarily offer rights holders takedowns for the impersonation of one’s voice or likeness. To date, there is still no federal protection for these so-called “publicity rights,” so platforms are largely doing these takedowns as a show of goodwill.

YouTube Music has focused more than perhaps any other streaming service on curbing deepfake impersonations. According to a company blog post, YouTube has developed “new synthetic-singing identification technology within Content ID that will allow partners to automatically detect and manage AI-generated content on YouTube that simulates their singing voices,” adding another layer of defense for rights holders who are already kept busy policing their own copyrights across the internet.

Another concern with the proliferation of AI music on streaming services is that it can enable streaming fraud. In September, federal prosecutors indicted a North Carolina musician for allegedly using AI to create “hundreds of thousands” of songs and then using the AI tracks to earn more than $10 million in fraudulent streaming royalties. By spreading out fake streams over a large number of tracks, quickly made by AI, fraudsters can more easily evade detection.

Spotify is working on that. Whether the songs are AI or human-made, the streamer now has gates to prevent spamming the platform with massive amounts of uploads. It’s not AI-specific, but it’s a policy that impacts the bad actors who use AI for this purpose.

SoundCloud also has a solution: The service believes its fan-powered royalties system also reduces fraud. “Fan-powered royalties tie royalties directly to the contributions made by real listeners,” a company blog post reads. “Fan-powered royalties are attributable only to listeners’ subscription revenue and ads consumed, then distributed among only the artists listeners streamed that month. No pooled royalties means bots have little influence, which leads to more money being paid out on legitimate fan activity.” Again, not AI-specific, but it will have an impact on AI uploaders with bad motives.
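To see why tying payouts to each listener’s own revenue blunts bot activity, here is a minimal sketch that compares a pooled (pro-rata) split with a fan-powered one. It is an illustration under simplifying assumptions, not SoundCloud’s actual formula: the function names, the listener and track names, and the flat 10.00 of revenue per account are all hypothetical.

```python
from collections import defaultdict


def pro_rata(streams, revenue):
    """Pooled model: all revenue goes into one pot, split by share of total streams."""
    pool = sum(revenue.values())
    totals = defaultdict(int)
    for plays in streams.values():
        for artist, count in plays.items():
            totals[artist] += count
    all_streams = sum(totals.values())
    return {artist: pool * count / all_streams for artist, count in totals.items()}


def fan_powered(streams, revenue):
    """Fan-powered model: each listener's revenue is split only among
    the artists that listener streamed."""
    payouts = defaultdict(float)
    for listener, plays in streams.items():
        listener_total = sum(plays.values())
        if listener_total == 0:
            continue  # an account with no plays contributes nothing here
        for artist, count in plays.items():
            payouts[artist] += revenue[listener] * count / listener_total
    return dict(payouts)


# Hypothetical month: two real fans and one bot account looping a spam track.
streams = {
    "fan_a": {"indie_band": 10},
    "fan_b": {"pop_star": 10},
    "bot": {"spam_track": 980},
}
# Assumed revenue attributable to each account (subscription plus ads).
revenue = {"fan_a": 10.0, "fan_b": 10.0, "bot": 10.0}

for name, model in (("pooled", pro_rata), ("fan-powered", fan_powered)):
    payouts = model(streams, revenue)
    print(name, {artist: f"{amount:.2f}" for artist, amount in payouts.items()})
```

In this toy month, the bot’s track collects 29.40 of the 30.00 pool under the pooled model, but only the 10.00 that its own account brought in under the fan-powered model, no matter how many plays it racks up.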

So, what’s next? Continuing to develop better AI detection and attribution tools, anticipating future issues with AI — like AI agents employed for streaming fraud operations — and fighting for better publicity rights protections. It’s a thorny situation, and we haven’t even gotten into the philosophical debate of defining the line between fully AI generated and partially AI generated songs. But one thing is certain — this will continue to pose challenges to the streaming status quo for years to come.

An Instagram user recently used artificial intelligence to make it sound like Rihanna said things that never actually came out of her mouth — and the star isn’t happy. On Wednesday (Feb. 5), the Fenty mogul jumped in the comments on a video doctored to sound like she was listing out her “most expensive purchases” […]

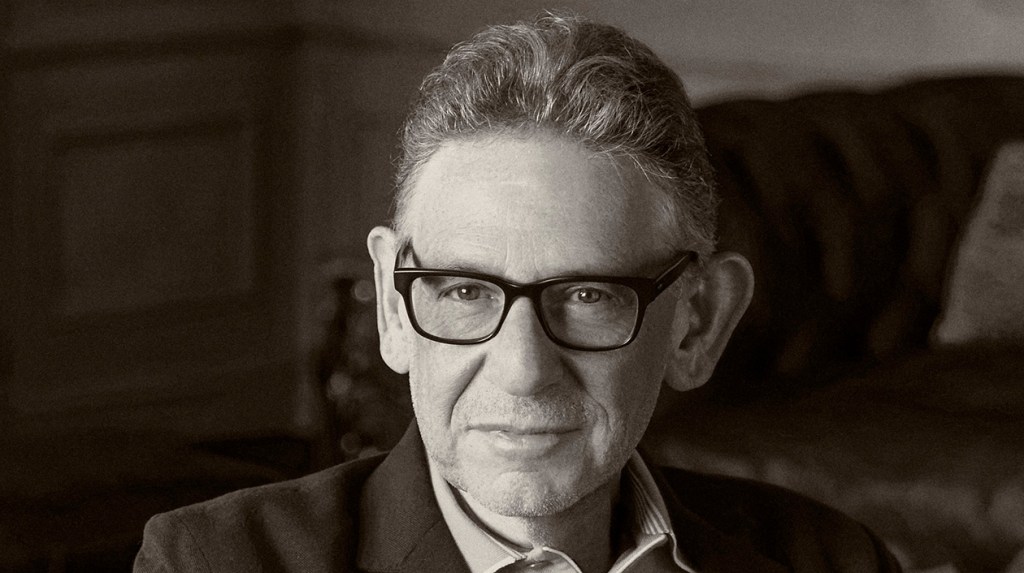

Universal Music Group (UMG) chairman/CEO Lucian Grainge released his annual New Year’s memo to staff on Monday (Feb. 3), about a month later than usual owing to the wildfires that broke out in Los Angeles in early January.

In the 3,113-word letter, Grainge retreaded much of the same ground covered in his 2023 and 2024 New Year’s addresses and his presentation at the company’s Capital Markets Day in September, including mentions of “Streaming 2.0,” “responsible AI,” “artist-centric” approaches, “super fans” and more.

Grainge began the letter by noting UMG’s accomplishments over the last year, including breaking new artists like Sabrina Carpenter and Chappell Roan, working with Taylor Swift — the most streamed artist globally on Spotify, Amazon and Deezer — and Apple Music’s Artist of the Year Billie Eilish, adding that “achievements like these don’t just happen.”

“Those achievements were greatly assisted by our continuing self-reinvention, reshaping our organizational structure,” wrote Grainge, referring to the widespread restructuring of UMG’s recorded music division in 2024 that led to layoffs. “Within months we were operating with greater agility and efficiency. We then saw something exceptional take place.”

As the largest music company in the world enters 2025, Grainge reminded his staff that UMG is still a “relative minnow” compared to the trillion-dollar tech companies it calls its partners. Still, he noted that UMG was successful in ushering in its “artist-centric strategy,” a term he introduced two years ago to describe UMG’s efforts to better monetize music and to limit gaming of the systems.

“Not only do we want to ensure that artists are protected and rewarded, but we’re also going after bad actors who are actively engaged in nefarious behavior such as large-scale copyright infringement,” he wrote. In the last year, UMG forged new deals with TikTok, Spotify and Amazon to aid in those efforts. Also in 2024, UMG sued AI companies Suno and Udio and music distributor Believe to prevent what Grainge called “large-scale copyright infringement.”

Grainge then detailed his plans to influence and set the ground rules for “Streaming 2.0” — or “the next era of streaming” — with UMG’s partners. He pointed back to new agreements with Amazon and Spotify as major wins, adding, “We expect that similar agreements with other major platforms will be coming in the months ahead.”

He also discussed the company’s goal of finding ways to “accelerat[e] our direct to consumer and superfan strategy,” building on past moves like its strategic partnership and investment in NTWRK and Complex. “This year will see us expanding our product offerings to fans, as we continue to redefine the ‘merch’ category and create superfan collectibles and experiences,” Grainge wrote.

He also noted the company’s plan to “aggressively grow our presence in high potential markets,” whether through A&R, artist and label service agreements or mergers and acquisitions. In the last year, UMG worked toward this goal when Virgin announced an agreement to acquire Downtown Music Holdings, and by purchasing the remaining share of [PIAS] and partnering with Mavin Global in Nigeria.

“The reason so many independent music entrepreneurs actively seek to partner with UMG when they have more alternatives than ever before is that we provide what they’re seeking… After all, we’re not a financial institution that views music as an ‘asset,’” he wrote. “And we’re not an aggregator that views music as ‘content.’ We are a music company built by visionary music entrepreneurs. For us, music is a vital — perhaps the vital — art form.”

Grainge ended on a high note, writing, “Let me leave you with this: Some will try to disrupt our business or criticize us. That we know. It comes with being in the most competitive market that music and music-based entertainment has ever seen, and it comes with being the industry’s leader and primary driving force. But our vision and our ability to consistently execute gives us the momentum to continue to succeed and grow.”

Read Grainge’s full New Year’s note to staff below.

Dear Colleagues:

When I wrote my first letter about the L.A. fires, I said that my annual New Year’s note would have to come later than usual. And so here it is…

Last night’s Grammy awards served as a perfect metaphor for our company’s performance in 2024—breaking new artists and taking our superstars to new heights. In fact, last night, UMG artists and songwriters brought home more Grammys than ever before in our history. You can read more about that here.

Thanks to your day-in, day-out dedication and hard work, we accomplished so much together in 2024 and are positioning ourselves for another great year of success. I’ll sum up some of UMG’s stunning achievements last year and give you a glimpse of what we plan for this year.

Our company’s fundamental building block is artist development and in 2024, investment in new talent continued to produce spectacular results around the world. Consider the following facts: that UMG broke the two biggest artists in the world last year in Sabrina Carpenter and Chappell Roan; Taylor Swift was the most streamed globally on Spotify, Amazon and Deezer; and Apple Music named Billie Eilish its Artist of the Year. And that a UMG recording artist who is also signed to UMPG as a songwriter had the No. 1 song globally on the year-end lists for both Apple Music (Kendrick Lamar) and Spotify (Sabrina Carpenter). Or that UMG had four of the Top 5 artists globally on Spotify with Taylor Swift (No. 1), The Weeknd, Drake and Billie Eilish; eight of the Top 10 albums (Taylor Swift’s The Tortured Poets Department at No. 1); five of the Top 10 songs (Sabrina Carpenter’s “Espresso” at No. 1), and six of the 10 Most Viral songs (Lady Gaga and Bruno Mars’ “Die With A Smile” at No. 1). Or consider that in the U.S., UMG had all Top 3 label groups according to Billboard (Republic, Interscope and Universal Music Enterprises) not to mention four of the Top 5 artists and eight of the Top 10 albums, including all of the Top 5 – Taylor Swift (No. 1 and No. 2), Morgan Wallen, Noah Kahan and Drake. And on YouTube, six of the Top 10 songs (Kendrick Lamar at No. 1) and two spots on the Trending Topics Top 10 across all content categories in 2024 (Kendrick Lamar and Sabrina Carpenter).

You can read more of our remarkable achievements around the world at the end of this note, but as you can already see, in 2024, our momentum only grew.
Also, these were achievements not only by a few superstars, but also by dozens of artists from around the world, both developing and established, performing in multiple genres, styles and languages. Achievements like these don’t just happen. They are the culmination of maintaining a clear vision of who we are, what we do and where we’re going, then executing on that vision, maintaining momentum and, of course, at the heart of it all, having some absolutely incredible music to work with.

And last year those achievements were greatly assisted by our continuing self-reinvention, reshaping our organizational structure, re-building our teams and refining our strategy. A vision which boldly and, when necessary, quickly adapts to an ever-changing world. For example, in early 2024 we executed on our vision to realign our U.S. label structure, and within months we were operating with greater agility and efficiency. We then saw something exceptional take place: UMG had its best U.S. performance in six years, according to Luminate. I’m confident that our realignment will yield still further momentum around the world and that the achievements of our artists and songwriters, as well as UMG’s success, will reach new heights.

In 2024, we continued to lead the media industry in our embrace and advancement of “Responsible AI.” Three recent examples of that initiative include our agreements with SoundLabs, ProRata and KLAY, companies that are taking unique approaches to the rapidly evolving AI space through new technologies that provide accurate attribution and tools to empower and compensate artists.

Our leadership also includes our commitment to the enactment of Responsible AI public policies, fighting back against so-called text and data mining copyright exceptions and other misguided and ill-intentioned proposals that would enable what I will euphemistically call the unauthorized exploitation of creators’ work.
Instead, we will work towards legislative “guardrails” to ensure the healthy evolution and growth of AI that mutually serves creators, consumers and responsibly innovative technology players.

In my note last year, I said that 2024 would see us once again attracting the brightest entrepreneurs, expanding our existing relationships with other such talents and investing more resources into providing a full suite of artist services businesses to independent labels around the world. And we did exactly that. We acquired the remaining share of [PIAS] two years after taking an initial stake in the company and brought its highly respected co-founder Kenny Gates into our family. And we grew our geographic footprint. One example: our partnering with and investing in Mavin Global, whose founders Don Jazzy and Tega Oghenejobo continue to lead that company as well as, going forward, all of UMG’s business in Nigeria.

Just last month, Virgin announced it entered into an agreement to acquire Downtown Music Holdings, which includes FUGA, Downtown Artist & Label Services, Curve Royalties, CD Baby, Downtown Music Publishing and Songtrust.

The reason so many independent music entrepreneurs actively seek to partner with UMG when they have more alternatives than ever before is that we provide what they’re seeking: the most innovative creatives and finest resources that will advance the careers of their artists and achieve their financial goals within a culture that respects artists and their music. After all, we’re not a financial institution that views music as an “asset.” And we’re not an aggregator that views music as “content.” We are a music company built by visionary music entrepreneurs. For us, music is a vital, perhaps the vital, art form. Artists and the music they create are our lifeblood.
We’re proud both to invest in businesses that can and do support today’s leading music entrepreneurs and to advocate for the policies and practices that are designed to protect and grow the entire music ecosystem.

And finally, one of 2024’s announcements of which I am proudest is the formation of our Global Impact Team, whose mission is to enact positive change in our industry and in the communities in which we serve. This cross-functional group of executives brings a deep understanding of our global organization and will develop and execute strategies to tackle a variety of critical issues, including: equality; mental health and wellness; food insecurity and the unhoused; the environment; and education. By dovetailing seamlessly with our goals and those of our artists, we can promote and even catalyze beneficial and authentic changes where they are needed. Recently, in the wake of the terrible Los Angeles wildfires, the Impact Team mobilized, offering support to those affected, activating a multi-pronged relief effort to help both our own employees and the broader L.A. communities.

Before I get to what lies ahead for 2025, let me first provide you with some context as to the enviable position UMG holds.

UMG is a global creative enterprise at the center of an ecosystem of hundreds of digital partners. And while we’ve consistently been the music industry’s leader since the advent of the streaming era (an era whose dawn we were instrumental in ushering in), the leadership posture among our DSP partners has undergone some significant changes. For example, one of our fastest-growing subscription partners, YouTube, is also one of the most recently launched.
We expect more inevitable jockeying for the leadership position among standalone platforms as well as among the music services that are divisions of trillion-dollar valuation tech companies.

But, even though we are a relative minnow in comparison to a trillion-dollar tech company, the music of our incredible artists and songwriters enables us to exercise outsized influence on the global stage and serve as a critical catalyst in fostering a truly competitive commercial marketplace for music. We will keep using our position to promote a healthy and sustainable music ecosystem that benefits all artists at all stages of their careers.

Now to 2025 … starting with our artist-centric strategy:

When we introduced that strategy two years ago, we immediately went to work with our partners to make it a reality. In a matter of months, we reached agreements in principle on a number of issues: increasing the monetization of artists’ music; limiting the gaming of the system by protecting against fraud and content saturation; and focusing on the value of authentic artist-fan relationships, inspiring the development of more engaging consumer experiences, including specially designed new products and premium tiers for superfans. Platforms as diverse as Deezer, Spotify, TikTok, Meta and most recently Amazon, have adopted artist-centric principles in a wide variety of ways, principles that benefit the entire music industry from DIY to independent to major label artists and songwriters.

Our work in driving these artist-centric principles will continue in 2025. Not only do we want to ensure that artists are protected and rewarded, but we’re also going after bad actors who are actively engaged in nefarious behavior such as large-scale copyright infringement.
To that end, we’re setting forth the best practices that every responsible platform, distributor and aggregator should adopt: content filtering; checks for infringement across streaming and social platforms; penalty systems for repeat infringers; chain-of-custody certification and name-and-likeness verification. If every platform, distributor and aggregator were to adopt these measures and commit to continue to employ the latest technology to thwart bad actors, we would create an environment in which artists will reach more fans, have more economic and creative opportunities, and dramatically diminish the sea of noise and irrelevant content that threatens to drown out artists’ voices.

In September, during our Capital Markets Day presentation, I described what would constitute the next era of streaming: Streaming 2.0. Built on a foundation of artist-centric principles, Streaming 2.0 will represent a new age of innovation, consumer segmentation, geographic expansion, greater consumer value and ARPU growth.

I’m pleased to report that the Streaming 2.0 era has arrived. We recently announced a new agreement with Amazon that includes many of these elements, and just last week, we announced a multi-year agreement with Spotify. We expect that similar agreements with other major platforms will be coming in the months ahead.

In 2025, we’ll also be reaching out in new ways to engage fans. In addition to listening to their favorite artists’ music, fans want to build deeper connections to artists they love. Last year, in accelerating our direct-to-consumer and superfan strategy, we formed a strategic partnership and became an investor in NTWRK and Complex to build a premium live-video shopping platform for superfan culture. This year will see us expanding our product offerings to fans, as we continue to redefine the “merch” category and create superfan collectibles and experiences.
Some of this will be done through our current partners and some through our own D2C channels, which we will continue scaling to meet the massive appetite of fans.

After years of working to aggressively build a healthy commercial environment for artists and music (one in which we have reached approximately 670 million subscribers), we will be laser-focused in 2025 on continuing to expand the ecosystem and improve its monetization. As we did in 2024, this year we will continue to aggressively grow our presence in high potential markets through organic A&R, artist and label services agreements, and M&A.

The work that lies ahead of us will bring challenges, no doubt about that. But we will meet those challenges with pride and a sense of privilege, because no other form of creative expression is more fundamental to human existence than music. By that, of course, I mean real music created by human artists.

So let me leave you with this:

Some will try to disrupt our business or criticize us. That we know. It comes with being in the most competitive market that music and music-based entertainment has ever seen, and it comes with being the industry’s leader and primary driving force. But our vision and our ability to consistently execute gives us the momentum to continue to succeed and grow. Our global worldview and the internal competition fueled by our entrepreneurial spirit breeds innovation. Our passion for finding new and better ways to bring music to the world will keep us ahead of competitors and new entrants alike. We’ll continue to do what we do because what we do and how we do it is impossible to replicate. Our culture and our people, you, are our superpower.

I can’t wait to see and hear what this year brings, and I am thrilled to be on this journey with you.

Let’s go!

Lucian

The directory business hasn’t changed much in the last 50 years, but a group of young entrepreneurs is looking to shake things up with the help of artificial intelligence.

Promoters and booking agents have long relied on printed and bound phone and email directories for connecting with the tens of thousands of venues that make up the global touring network. Booking a 25-date tour might require contacting more than 100 venues and clubs for calendar availability, creating reliable demand for companies like Pollstar, VenuesNow and groups like the International Association of Venue Managers to print and publish an updated version of their database each year.

Updating these directories can be a huge undertaking, requiring hundreds of phone calls, emails and queries by the publisher’s staff. Entrepreneur Benji Stein, founder of Booking-Agent.IO, believes there is a better way and his new plan could revolutionize the way valuable information, such as professional contact details, is compiled and monetized by publishers.

Booking-Agent.IO’s data comes from regularly scraping the websites of major venues, and then analyzing and rating that data using AI tools. Contacts for each venue are cross-checked in real time against a number of databases, including LinkedIn, Hunter.IO and Apollo, Stein said, and the tool “searches specifically for the talent buyer of that venue, providing users with an email score” to rate the likely accuracy of the lead.

“We started building this tool a number of years ago, before the prevalence of ChatGPT,” Stein said, noting “it’s basically a search engine for talent buyers and for venues,” adding that “it allows you to see venues on a regional basis with information like capacity, upcoming events and social media links.”

Stein said he expects most current Booking-Agent.IO users to fit into the DIY category, either self-represented or working with artists who lack a full-time agent. When available, Booking-Agent.IO also includes contact information for other positions like production manager and marketing director.

Pricing is $49.99 per month for the basic membership, which comes with unlimited searches and 100 contacts per month. Booking-Agent.IO also offers enterprise subscription plans and only charges users for contacts that are accurate.

“If you book a show with the tool, it essentially pays for itself,” he said.

Challenging Pollstar’s dominance and market share seems unlikely in the long term, but Stein says he believes Booking-Agent.IO has a long-term advantage in data accuracy, noting that print directories eventually become outdated and need to be replaced. As users look to purchase a more recent edition of their booking directory, Stein hopes buyers will increasingly seek out an alternative and find Booking-Agent.IO.

“We’re very fresh. It’s a new tool,” Stein says. “Up until now, there’s been nothing like it and we’ve gotten really positive feedback from the kind of music industry people that we’ve onboarded.”

In case you missed it: Suno has picked up another lawsuit against it.

Before you read any further, go to this link and listen to one or two of the songs to which GEMA licenses rights and compare them to the songs created by the generative music AI software Suno. (You may not know the songs, but you’ll get the idea either way.) They are among the works over which GEMA, the German PRO, is suing Suno. And while those examples are selected to make a point, based on significant testing of AI prompts, the similarities are remarkable.

Suno has never said whether it trained its AI software on copyrighted works, but the obvious similarities seem to suggest that it did. (Suno did not respond to a request for comment.) What are the odds that artificial intelligence would independently come up with “Mambo No. 5,” as opposed to No. 4 or No. 6, plus refer to little bits of “Monica in my life” and “Erica by my side”?

“We were surprised how obvious it was,” GEMA CEO Tobias Holzmüller tells Billboard, referring to the music Suno generated. “So we’re using the output as evidence that the original works were elements of the training data set.” That’s only part of the case: GEMA is also suing over the similarities between the AI-created songs and the originals. (While songs created entirely by AI cannot be copyrighted, they can infringe on existing works.) “If a person would claim to have written these [songs that Suno output], he would immediately be sued, and that’s what’s happening here.”

Although the RIAA is also suing Suno, as well as Udio, this is the biggest case that involves compositions, as opposed to recordings — and it could set a precedent for the European Union. (U.S. PROs would not have the same standing to sue, since they hold different rights.) It will proceed differently from the RIAA case, which involves higher damages, and of course different laws, so Holzmüller explained the case to Billboard — as well as how it could unfold and what’s at stake. “We just want our members to be compensated,” Holzmüller says, “and we want to make sure that what comes out of the model is not blatantly plagiarizing works they have written.”

When did you start thinking about bringing a case like this?

We got the idea the moment that services like Suno and Udio hit the market and we saw how easy it was to generate music and how similar some of it sounds. Then it took us about six months to prepare the case and gather the evidence.

Your legal complaint is not yet public, so can you explain what you are suing over?

The case is based on two kinds of copyright infringement. Obviously, one is the training of the AI model on the material that our members write and the processing operations when generating output. There are a ton of legal questions about that, but I think we will be able to demonstrate without any reasonable doubt that if the output songs are so similar [to original songs] it’s unlikely that the model has not been trained on them. The other side is the output. Those songs are so close to preexisting songs, that it would constitute copyright infringement.

What’s the most important legal issue on the input side?

The text and data-mining exception in the Directive [on Copyright in the Digital Single Market, from 2019]. There is some controversy over whether this exception was intended to allow the training of AI models. Assuming that it was, it allows rights holders to opt out, and we opted out our entire membership. There could also be time and territoriality issues [in terms of where and when the original works were copied].

How does this work in terms of rights and jurisdiction?

On the basis of our membership agreement, we hold rights for reproduction and communication to the public, and in particular for use for AI purposes. As far as jurisdiction, if the infringement takes place in a given territory, you can sue there — you just have to serve the complaint in the country where the infringing company is domiciled. As a U.S. company, if you’re violating copyright in the EU, you are subject to EU jurisdiction.

In the U.S., these cases can come with statutory damages, which can run to $150,000 per work infringed in cases of willful infringement. Is there an amount you’re asking for in this complaint?

We want to stake out the principle and stop this type of infringement. There could be statutory damages, but the level has to be calculated, and there are different standards to do that, at a later stage [in the case].

Our long-term goal is to establish a system where AI companies that train their models on our members’ works seek a license from us and our members can participate in the revenues that they create. We published a licensing model earlier this year and we have had conversations with other services in the market that we want to license, but as long as there are unlicensed services, it’s hard for them to compete. This is about creating a level playing field.

How have other rightsholders reacted to this case?

Nothing but support, and a lot of questions about how we did it. Especially in the indie community, there’s a sense that we can only discuss sustainable licenses if we stand up against unauthorized use.

The AI-created works you posted online as examples are extremely similar to well-known songs to which you hold rights. But I assume those didn’t come up automatically. How much did you have to experiment with different prompts to get those results?

We tried different songs, and we tried the same songs a few times and it turned out that for some songs it was a similar outcome every time and for other songs the difference in output was much greater.

These results are much more similar to the original works than what the RIAA found for its lawsuits against Suno and Udio, and I assume the lawyers on those cases worked very hard. Do you think the algorithms work differently in Germany or for German compositions?

I don’t know. We were surprised ourselves. Only a person who can explain how the model works would be able to answer that.

Tell me a bit about the model license you mentioned.

We think a sustainable license has two pillars. Rightsholders should be compensated for the use of their works in training and building a model. And when an AI creates output that competes with input [original works], a license needs to ensure that original rightsholders receive a fair share of whatever value is generated.

But how would you go about attributing the revenue from AI-created works to creators? It’s hard to tell how much an AI relies on any given work when it creates a new one.

Attribution is one of the big questions. My personal view is that we may never be able to attribute the output to specific works that have been input, so distribution can only be done by proxy or by funding ways to allow the next generation of songwriters to develop in those genres. And we think PROs should be part of the picture when we talk about licensing solutions.

What’s the next step in this case procedurally?

It will take some time until the complaint is served [to Suno in the U.S.], and then the defendant will appoint an attorney in Munich, the parties will exchange briefs, and there will be an oral hearing late this year or early next year. Potentially, once there is a decision in the regional court, it could go [to the higher court, roughly equivalent to a U.S. appellate court]. It could even go to the highest civil court or, if matters of European rights are concerned, even to the European Court of Justice [in Luxembourg].

That sounds like it’s going to take a while. Are you concerned that the legal process moves so much slower than technology?

I wish we had a quicker process to clarify these legal issues, but that shouldn’t stop us. It would be very unfortunate if this race for AI would trigger a race to the bottom in terms of protection of content for training.

A new federal report on artificial intelligence says that merely prompting a computer to write a song isn’t enough to secure a copyright on the resulting track — but that using AI as a “brainstorming tool” or to assist in a recording studio would be fair game.

In a long-awaited report issued Wednesday (Jan. 29), the U.S. Copyright Office reiterated the agency’s basic stance on legal protections for AI-generated works: That only human authors are eligible for copyrights, but that material created with the assistance of AI can qualify on a case-by-case basis.

Amid the surging growth of AI technology over the past two years, the question of copyright coverage for outputs has loomed large for the nascent industry, since works that aren’t protected by copyrights would be far harder for their creators to monetize.

“Where that [human] creativity is expressed through the use of AI systems, it continues to enjoy protection,” said Shira Perlmutter, Register of Copyrights, in the report. “Extending protection to material whose expressive elements are determined by a machine, however, would undermine rather than further the constitutional goals of copyright.”

Simply using a written prompt to order an AI model to spit out an entire song or other work would fail that test, the Copyright Office said. The report directly quoted from a comment submitted by Universal Music Group, which likened that scenario to “someone who tells a musician friend to ‘write me a pretty love song in a major key’ and then falsely claims co-ownership.”

“Prompts alone do not provide sufficient human control to make users of an AI system the authors of the output,” the agency wrote. “Prompts essentially function as instructions that convey unprotectible ideas.”

But the agency also made clear that using AI to help create new works would not automatically void copyright protection — and that when AI “functions as an assistive tool” that helps a person express themselves, the final output would “in many circumstances” still be protected.

“There is an important distinction between using AI as a tool to assist in the creation of works and using AI as a stand-in for human creativity,” the Office wrote.

To make that point, the report cited specific examples that would likely be fair game, including Hollywood studios using AI-powered tech to “de-age” actors in movies. The report also said AI could be used as a “brainstorming tool,” quoting from a Recording Academy submission that said artists are currently using AI to “assist them in creating new music.”

“In these cases, the user appears to be prompting a generative AI system and referencing, but not incorporating, the output in the development of her own work of authorship,” the agency wrote. “Using AI in this way should not affect the copyrightability of the resulting human-authored work.”

Wednesday’s report, like previous statements from the Copyright Office on AI, offered broad guidance but avoided hard-and-fast rules. Songs and other works that use AI will require “case-by-case determinations,” the agency said, as to whether they “reflect sufficient human contribution” to merit copyright protection. The exact legal framework for deciding such cases was not laid out in the report.

The new study on copyrightability is the second of three studies the agency is conducting on AI. The first report, issued last year, recommended federal legislation banning the use of AI to create fake replicas of real people; bills that would do so are pending before Congress.

The final report, set for release at some point in the future, deals with the biggest AI legal question of all: whether AI companies break the law when they “train” their models on vast quantities of copyrighted works. That question — which could implicate trillions of dollars in damages and exert a profound effect on future AI development — is already the subject of widespread litigation.

Perplexity AI has presented a new proposal to TikTok’s parent company that would allow the U.S. government to own up to 50% of a new entity that merges Perplexity with TikTok’s U.S. business, according to a person familiar with the matter.

The proposal, submitted last week, is a revision of a prior plan the artificial intelligence startup had presented to TikTok’s parent ByteDance on Jan. 18, a day before the law that bans TikTok went into effect.

The first proposal, which ByteDance hasn’t responded to, sought to create a new structure that would merge San Francisco-based Perplexity with TikTok’s U.S. business and include investments from other investors.

The new proposal would allow the U.S. government to own up to half of that new structure once it makes an initial public offering of at least $300 billion, said the person, who was not authorized to speak about the proposal. The person said Perplexity’s proposal was revised based on feedback from the Trump administration.

If the plan is successful, the shares owned by the government would not have voting power, the person said. The government also would not get a seat on the new company’s board.

ByteDance and TikTok did not immediately respond to a request for comment.

Under the plan, ByteDance would not have to completely cut ties with TikTok, a favorable outcome for its investors. But it would have to allow “full U.S. board control,” the person said.

Under the proposal, the China-based tech company would contribute TikTok’s U.S. business without the proprietary algorithm that fuels what users see on the app, according to a document seen by the Associated Press. In exchange, ByteDance’s existing investors will get equity in the new structure that emerges.

The proposal seems to mirror a strategy Steven Mnuchin, treasury secretary during Trump’s first term, discussed Sunday on Fox News’ Sunday Morning Futures — that a new investor in TikTok could simply “dilute down” the Chinese ownership and satisfy the law. Mnuchin has previously expressed interest in investing in the company.

“But the technology needs to be disconnected from China,” he added. “It needs to be disconnected from ByteDance. There’s absolutely no way that China would ever let us have something like that in China.”

The Perplexity proposal comes as several investors are expressing interest in TikTok. President Donald Trump said late Saturday that he expects a deal could be made in as little as 30 days.

On a flight from Las Vegas to Miami on Air Force One, Trump also said he hadn’t discussed a deal with Larry Ellison, CEO of software maker Oracle, despite a report that Oracle, along with outside investors, was considering taking over TikTok’s global operation.

“Numerous people are talking to me. Very substantial people,” Trump said. “We have a lot of interest in it, and the United States will be a big beneficiary. … I’d only do it if the United States benefits.”

Under a bipartisan law passed last year, TikTok was to be banned in the United States by Jan. 19 if it did not cut ties with ByteDance. The Supreme Court upheld the law, but Trump then issued an executive order to halt enforcement of the law for 75 days.

Trump, on Air Force One, noted that Ellison lives “right down the road” from his Mar-a-Lago estate, but added, “I never spoke to Larry about TikTok. I’ve spoken to many people about TikTok and there’s great interest in TikTok.”

TikTok briefly shut down in the U.S. a week ago, but went back online after Trump said he would postpone the ban. Trump had unsuccessfully attempted a U.S. ban of the platform during his first term. But he has since reversed his position and has credited the platform with helping him win more young voters during last year’s presidential election.

TikTok CEO Shou Chew attended Trump’s inauguration Jan. 20, along with some other tech leaders who’ve been forging friendlier ties with the new administration.

Congress voted to ban TikTok in the U.S. out of concern that TikTok’s ownership structure represented a security risk. The Biden administration argued in court for months that it was too much of a risk to allow a Chinese company to control the algorithm that fuels what people see on the app. Officials also raised concerns about user data collected on the platform.

However, to date, the U.S. hasn’t provided public evidence of TikTok handing user data to Chinese authorities or allowing them to tinker with its algorithm.

Paul McCartney is speaking out against proposed changes to copyright laws, warning that artificial intelligence could harm artists.

The British government is currently considering a policy that would allow tech companies to use creators’ works to train AI models unless creators specifically opt out. In an interview with the BBC, set to air on Sunday (Jan. 26), the 82-year-old former Beatle cautioned that the proposal could “rip off” artists and lead to a “loss of creativity.”

“You get young guys, girls, coming up, and they write a beautiful song, and they don’t own it, and they don’t have anything to do with it. And anyone who wants can just rip it off,” McCartney said. “The truth is, the money’s going somewhere… Somebody’s getting paid, so why shouldn’t it be the guy who sat down and wrote ‘Yesterday’?”

The U.K. Labour Party government has expressed its ambition to make Britain a global leader in AI. In December 2024, the government launched a consultation to explore how copyright law can “enable creators and right holders to exercise control over, and seek remuneration for, the use of their works for AI training” while also ensuring “AI developers have easy access to a broad range of high-quality creative content,” according to the Associated Press.

“We’re the people, you’re the government. You’re supposed to protect us. That’s your job,” McCartney told the BBC. “So you know, if you’re putting through a bill, make sure you protect the creative thinkers, the creative artists, or you’re not going to have them.”

The Beatles’ final song, “Now and Then,” released in 2023, utilized a form of AI called “stem separation” to help surviving members McCartney and Ringo Starr clean up a 60-year-old, low-fidelity demo recorded by John Lennon, making it suitable for a finished master recording.

As AI becomes more prevalent in entertainment, music and daily life, the debate around its impact continues to grow. In April 2024, Billie Eilish, Pearl Jam and Nicki Minaj were among 200 signatories of an open letter directed at tech companies, digital service providers and AI developers. The letter criticized irresponsible AI practices, calling it an “assault on human creativity” that “must be stopped.”

Deezer, a leading French streaming service, says that roughly 10,000 fully AI-generated tracks are being delivered to the platform every day, equating to about 10% of its daily music delivery.
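
The two figures above imply a rough size for Deezer's total daily delivery. The sketch below works that arithmetic out; it assumes the reported numbers are exact, although the article gives both as approximations, and the function name is hypothetical.

```python
def implied_total_daily_delivery(ai_tracks_per_day: int, ai_share_percent: int) -> int:
    """Estimate total tracks delivered per day from the AI count and its share.

    Assumption: the share is a whole-number percentage of total daily delivery.
    Integer arithmetic avoids floating-point rounding.
    """
    if ai_share_percent <= 0:
        raise ValueError("share must be a positive percentage")
    return ai_tracks_per_day * 100 // ai_share_percent


# Reported figures: roughly 10,000 fully AI-generated tracks per day, about 10% of delivery.
total = implied_total_daily_delivery(10_000, 10)
human_or_mixed = total - 10_000
print(f"Implied total daily delivery: {total:,} tracks")
print(f"Implied tracks not flagged as fully AI-generated: {human_or_mixed:,}")
```

On those assumptions, the reported figures correspond to roughly 100,000 tracks delivered per day in total.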

This finding emerged from an AI detection tool Deezer developed and filed two patent applications for in December, the company says. Now, the service is developing a tagging system for the fully AI-generated works it detects in order to remove them from its algorithmic and editorial recommendations and boost human-made music.

According to a press release, Deezer’s new tool can detect AI-made music from several popular AI music models, including Suno and Udio, with plans to further expand its capabilities. The company notes that the tool could eventually be trained to detect from “practically any other similar [AI model]” as long as Deezer can gain access to samples from those models — though the company also says it has “made significant progress in creating a system with increased generalizability, to detect AI generated content without a specific dataset to train on.” It has additional plans to develop the capability to identify deepfaked voices.

Deezer is the first music streaming platform to announce the launch of an AI detection tool and the first to seek a concrete solution for the growing pool of AI music accumulating on streaming platforms worldwide. Given that AI-generated music can be made much more quickly than human-created works, critics have expressed concern that these AI tracks will increasingly take away money and editorial opportunities from human artists on streaming services.

Among those critics are the three major music companies — Universal Music Group, Sony Music Entertainment and Warner Music Group — which collectively sued top AI music models Suno and Udio for copyright infringement last summer. In their complaint, the three companies said Suno and Udio could generate music that would “saturate the market with machine-generated content that will directly compete with, cheapen and ultimately drown out the genuine sound recordings on which [the services were] built.”

“As artificial intelligence continues to increasingly disrupt the music ecosystem, with a growing amount of AI content flooding streaming platforms like Deezer, we are proud to have developed a cutting-edge tool that will increase transparency for creators and fans alike,” says Alexis Lanternier, CEO of Deezer. “Generative AI has the potential to positively impact music creation and consumption, but its use must be guided by responsibility and care in order to safeguard the rights and revenues of artists and songwriters. Going forward we aim to develop a tagging system for fully AI generated content, and exclude it from algorithmic and editorial recommendation.”

“We set out to create the best AI detection tool on the market, and we have made incredible progress in just one year,” says Aurelien Herault, Deezer’s chief innovation officer. “Tools that are on the market today can be highly effective as long as they are trained on data sets from a specific generative AI model, but the detection rate drastically decreases as soon as the tool is subjected to a new model or new data. We have addressed this challenge and created a tool that is significantly more robust and applicable to multiple models.”

State Champ Radio
